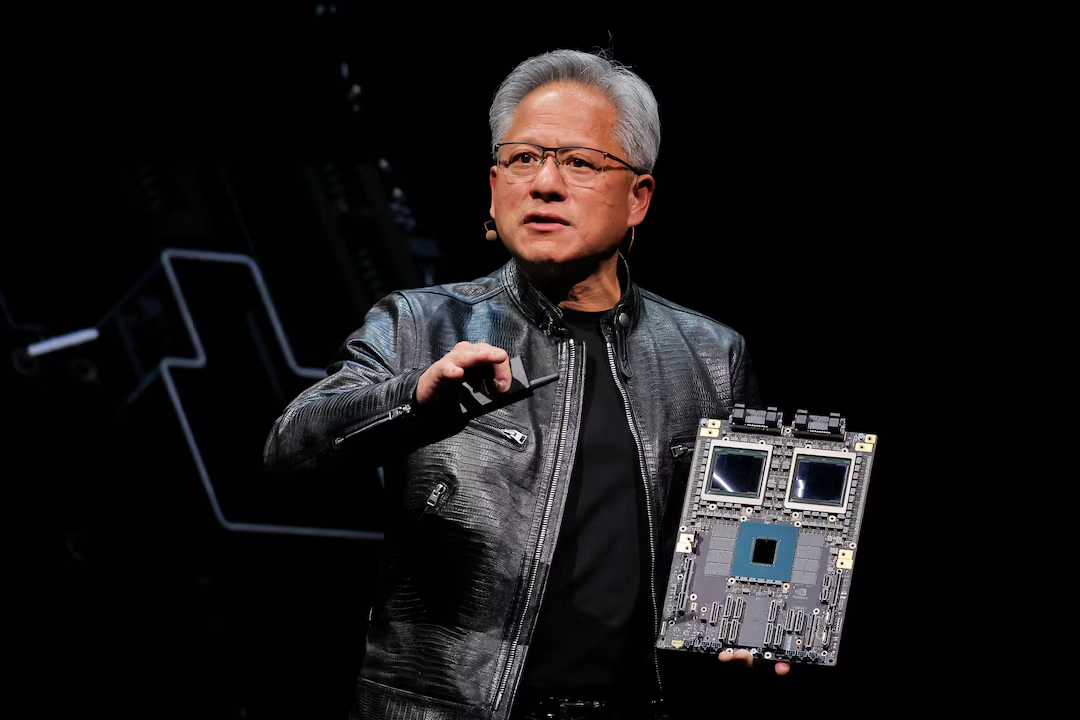

South Korea deploys more industrial robots per manufacturing worker than almost any nation on earth. It has, for years, been the laboratory where the theoretical promise of automated manufacturing meets the unforgiving reality of high-precision, high-volume production. So when Madison Huang, Nvidia's senior director of product marketing for physical AI platforms and, as it happens, the eldest daughter of CEO Jensen Huang, flew to Seoul on April 28, 2026 and spent two days meeting the leadership of Samsung, SK Hynix, LG Electronics, Hyundai Motor, and Doosan Robotics in rapid succession, it wasn't a courtesy tour. It was a sales call on the industrial world's most demanding customers.

The Doosan meeting is the one that produced concrete commitments. Huang toured Doosan Robotics' innovation centre in Seongnam, Gyeonggi Province, where she met with CEO Kim Min-pyo. The discussions focused on integrating Doosan's Agentic Robot Operating System with Nvidia's simulation tools and AI training frameworks. What emerged is a partnership with specific deliverables and a specific timeline: intelligent robot solutions powered by the Agentic Operating System in 2027, followed by the launch of industrial humanoid robots in 2028.

Two years. Industrial humanoids. On factory floors. That's not a research horizon — it's a product roadmap.

What Doosan's "Agentic OS" Actually Is

Before unpacking why this matters, it's worth being precise about what Doosan's Agentic Robot Operating System is — because the press release language obscures something genuinely interesting.

Traditional industrial cobots are pre-programmed. You tell the arm to pick up a box from coordinate A and place it at coordinate B, and it does exactly that, repeatedly, without deviation. Change the box size, shift the conveyor, put an obstacle in the path — the robot stops or fails. The limitation isn't mechanical. It's cognitive. The robot has no model of the world, only a script.

Doosan's natural-language-driven depalletizing solution, developed with Nvidia, allows robots to be programmed using simple words and text, integrating 3D vision-based box recognition, text recognition, obstacle avoidance and automatic path generation. The agentic operating system extends that logic: a robot that can perceive its environment, interpret spoken or written instructions, and generate its own motion paths to accomplish a task is categorically different from one executing a fixed program. It's the difference between a tool and an agent. The platform enables robots to perceive their surroundings, optimise movement paths and perform high-precision tasks.
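To make the script-versus-agent contrast concrete, here is a toy Python sketch. It is not Doosan's or Nvidia's software: the grid world, `scripted_move`, and `planned_move` are hypothetical names invented for illustration, and breadth-first search stands in for whatever path generation the real system uses. The scripted routine replays fixed coordinates and gives up when one is blocked, while the planner looks at the current layout and finds its own route.

```python
from collections import deque

def scripted_move(grid, path):
    """Replay a fixed list of cells; fail if any cell is blocked."""
    for r, c in path:
        if grid[r][c] == 1:  # 1 marks an obstacle
            return None      # a fixed script has no way to recover
    return path

def planned_move(grid, start, goal):
    """Breadth-first search for a shortest obstacle-free path (4-connected grid)."""
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk parent links back to the start to rebuild the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return None  # no route exists

# An obstacle has appeared in the middle of the old straight-line route.
grid = [
    [0, 0, 0],
    [0, 1, 0],
    [0, 0, 0],
]
start, goal = (1, 0), (1, 2)
fixed_script = [(1, 0), (1, 1), (1, 2)]

scripted_result = scripted_move(grid, fixed_script)
planned_result = planned_move(grid, start, goal)
print("scripted:", scripted_result)
print("planned: ", planned_result)
```

The scripted call returns `None` because its middle waypoint is now occupied; the planner returns a five-cell detour around the obstacle. A production system would plan in continuous 3D space from vision data, but the division of labour is the same.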

Nvidia's role is the intelligence layer. Doosan Robotics' palletising work uses Nvidia Cosmos Reason, one of Nvidia's world foundation models that allows robots to reason about physical environments before acting in them. Combined with Nvidia Isaac Sim and cuRobo for sim-to-real transfer, the stack lets Doosan train robot behaviours in virtual environments and deploy them to physical cobots with dramatically reduced real-world trial-and-error. The promise: a robot that learns a task in simulation, then does it correctly in a factory on day one.

That's the technical picture. The commercial picture is bigger.

"The success of physical AI depends not only on the intelligence of AI models, but also on the stability of execution platforms that can operate without error in real-world environments. Based on today's discussions, we will push forward the commercialisation of intelligent robotic solutions and industrial humanoids by combining Doosan's hardware manufacturing capabilities with Nvidia's software ecosystem."

— Kim Min-pyo, CEO, Doosan Robotics

Nvidia's Industrial Land Grab — and Where Doosan Fits

Nvidia unveiled new physical AI infrastructure at GTC on March 16, 2026: Cosmos world models, Isaac simulation frameworks, and the GR00T N foundation models for humanoid robots. Physical AI leaders including ABB Robotics, FANUC, KUKA, Universal Robots and YASKAWA are all building on Nvidia technology to develop and deploy physical AI at scale. That's a who's-who of industrial automation. Every major robot arm manufacturer in the world is now, in some capacity, building on Nvidia's software infrastructure.

The question is whether that means Nvidia has won the industrial AI race or simply entered it. There's a meaningful difference.

The global industrial robotics market is expected to reach $95 billion by 2030, growing at roughly 11% annually. Physical AI — robots that reason, adapt and interact with unstructured environments — represents the next layer of value on top of that hardware market. Nvidia's bet is that it captures that layer the same way it captured the AI training market: by making its computing infrastructure so deeply embedded in the development workflow that it becomes the default. Isaac Sim for training. Omniverse for digital twins. Jetson Thor for edge compute. GR00T N for robot reasoning. Each product creates dependency. Together, they create a platform.

Doosan is not ABB or FANUC. It's a South Korean cobot specialist founded as part of the 130-year-old Doosan conglomerate, listed on the Korea Stock Exchange, with a specific focus on collaborative robots designed to work alongside humans rather than replace them. One of the major advantages of cobots is their minimal footprint and rapid deployment. Unlike traditional industrial robots, which require dedicated, permanent installation spaces and full caging for operator safety, cobots can be deployed directly alongside human workers, often with very short lead times from installation to operation.

That distinction matters. The use case for Doosan's AI-enhanced cobots isn't the fully automated factory of science fiction. It's the real factory floor of 2026, where labour shortages are acute, where most processes still need human judgment, and where a cobot that can handle the physically demanding or repetitive tasks — palletising, depalletising, quality inspection — while a human handles the exceptions is more commercially viable than a fully autonomous system that costs three times as much and requires factory redesign to deploy.

A Doosan P3020 cobot arm can move 60 lbs at a rate of seven picks per minute, or around 25,000 pounds of product per hour. That's the value proposition right now: replacing the most physically demanding work, at a price point that mid-sized manufacturers can actually afford, with deployment timelines measured in days rather than months.
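The hourly figure follows directly from the two quoted numbers. A quick Python check, assuming every pick carries the full 60 lbs and the arm runs without pauses (both are simplifying assumptions, not claims from Doosan):

```python
LBS_PER_PICK = 60        # payload per pick, as quoted above
PICKS_PER_MINUTE = 7     # pick rate, as quoted above
MINUTES_PER_HOUR = 60

def hourly_throughput_lbs(lbs_per_pick, picks_per_minute):
    """Pounds moved per hour at a constant pick rate with no downtime."""
    return lbs_per_pick * picks_per_minute * MINUTES_PER_HOUR

throughput = hourly_throughput_lbs(LBS_PER_PICK, PICKS_PER_MINUTE)
print(throughput)  # 25200
```

That gives 25,200 lbs per hour, which the article rounds to 25,000. Real throughput would be lower wherever boxes are lighter than the maximum payload or the line stops.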

Korea's position in this story isn't incidental. The country's robot density (1,012 robots per 10,000 manufacturing workers as of the most recent IFR data, the highest globally) means Korean manufacturers have more experience integrating robotic systems into production environments than almost anyone. They've also lived through the limitations of the current generation of industrial robots: rigid, expensive to reprogram, unable to handle variation. When Nvidia's Madison Huang visits Seoul and meets five major industrial conglomerates in 48 hours, she's targeting the customers most likely to understand exactly why adaptive, AI-driven robots are worth paying for, and most capable of deploying them at scale once they work. The Korean market isn't a test bed. It's a proving ground.

The 2028 Humanoid Target — Ambitious or Aggressive?

Doosan Robotics and Nvidia are also considering developing key components including robot-AI interfaces, standardised control protocols, task-specific models and safety control guardrails, with the pair planning to showcase joint achievements at CES 2027.

Industrial humanoids by 2028 is a specific claim that deserves scrutiny. Humanoid robots (bipedal, dexterous, capable of operating in human-designed environments) are the frontier that every serious robotics company is racing toward. Boston Dynamics, Figure, Agility, AGIBOT, NEURA Robotics: all are working on the same category, all with slightly different timelines and form factors. NEURA Robotics launched a Porsche-designed Gen 3 humanoid at GTC 2026, while Figure, Agility and Boston Dynamics have all integrated Nvidia's Jetson Thor into existing humanoids.

The difference with the Doosan-Nvidia approach is the emphasis on the execution platform, not just the hardware. Kim Min-pyo's quote about "stability of execution platforms that can operate without error in real-world environments" is doing real work there. Most humanoid failures in real-world deployments aren't hardware failures — they're software failures. The robot perceives a situation, makes a wrong inference, takes a wrong action. Building the operating system layer that prevents that in industrial contexts — where the cost of an error is a damaged part, an injured worker, or a halted production line — is harder than building the robot itself.

Here's the contrarian read: the 2028 industrial humanoid target may be less about shipping a product and more about signalling. Jensen Huang called March 2026 "the ChatGPT moment for robotics" at GTC. Every serious robotics company needs to be on the physical AI roadmap right now, or risk being perceived as legacy hardware. The partnership announcement, the CES 2027 showcase plan, the 2028 humanoid target — these are as much investor narrative as product commitments. That doesn't make them false. It means the distance between roadmap and reality deserves watching.

What's actually being built — a numbers snapshot:

$95 billion — projected global industrial robotics market by 2030 (IFR)

1,012 robots per 10,000 workers — South Korea's robot density, highest globally

2 days — standard Doosan cobot deployment time from installation to operation

25,000 lbs/hour — throughput of a Doosan P3020 cobot on palletising tasks

2027 — target date for joint Nvidia-Doosan Agentic OS robot solutions

2028 — target date for commercial industrial humanoid robots under the partnership

What Founders Should Actually Do With This

If you're building software for manufacturing, logistics, or supply chain — or if you're an operator running a facility with repetitive, high-volume physical tasks — three things are worth watching.

First, the Agentic OS as a deployment accelerator. Natural-language robot programming means the bottleneck shifts from specialised engineers to domain experts. A warehouse manager who understands their workflow but can't write ROS code could, in the Doosan-Nvidia model, instruct a robot the same way they'd instruct a new hire. If that actually works at scale, the addressable market for industrial automation expands dramatically — because cost and expertise barriers drop simultaneously.

Second, sim-to-real transfer as the reliability unlock. Doosan's integration of Nvidia Isaac Sim means robots can be trained on millions of virtual scenarios before touching a real factory floor. For manufacturers worried about deploying unproven technology on live production lines, this matters. A robot that's been trained on 10 million simulated depalletising scenarios before its first real pick is a very different risk proposition from one trained on 1,000 real examples.

Third, the Korea-first rollout signals where early adoption will happen. Samsung, SK Hynix, LG, Hyundai — these aren't just Nvidia's Korean sales targets. They're the factories where the first generation of AI industrial robots will actually get tested at volume. South Korea's manufacturing sector will generate the real-world data that trains the next generation of models. The companies that are watching those deployments carefully — tracking what works, what fails, and why — will have a significant advantage when the technology starts crossing into their own industries.

The Nvidia-Doosan partnership isn't a press release. It's a clock starting on a two-year sprint toward industrial humanoids — built by a Korean cobot company and a Californian GPU giant, validated in the most robot-dense manufacturing environment on the planet. Whether the 2028 deadline holds is secondary. What matters is that the infrastructure being assembled around Nvidia's physical AI stack — across Isaac, Cosmos, Omniverse, and now Doosan's Agentic OS — is real, it's funded, and it's moving faster than most factory operators have processed.

The assembly line has been the same basic idea for 120 years. That's about to change. The question isn't whether to pay attention. It's whether you're early enough to shape what comes next.