AI Deeptech

The Trillion-Dollar Ghost: Anthropic’s $900B Valuation is the Final Stress Test for Private Capital

As the board prepares for a May decision on a $50 billion capital call, Anthropic is no longer just a research lab—it is a sovereign-scale infrastructure play testing the limits of venture math and global compute.

Elon Musk Just Spent Two Days on the Stand Explaining Why OpenAI's $852 Billion IPO Should Be Stopped

There's a courtroom in Oakland, California where the future of artificial intelligence is currently being decided by a nine-person jury that, as of last Monday, had been carefully selected for their neutral opinions about Elon Musk and AI. That detail alone tells you something about how strange and consequential this moment is.

LinkedIn's New CEO Just Disclosed a Number Microsoft Never Has. Here's Why That's the Real Story.

Dan Shapero had been LinkedIn's CEO for approximately one week when Reuters caught up with him on April 29. What he chose to say in that first major public statement is instructive. He didn't talk about product vision. He didn't talk about the company's 1.3 billion members. He talked about a revenue number — a specific, unprecedented one that Microsoft has never disclosed for any LinkedIn product line in the company's history.

Yann LeCun Raised $1 Billion for a Startup That Bets the Entire AI Industry Is Wrong

There's a version of the Yann LeCun story that reads as vanity. A 65-year-old Turing Award winner, restless inside a corporate machine, walks out and immediately starts fundraising at a valuation most Series C companies would envy. There are worse ways to frame it.

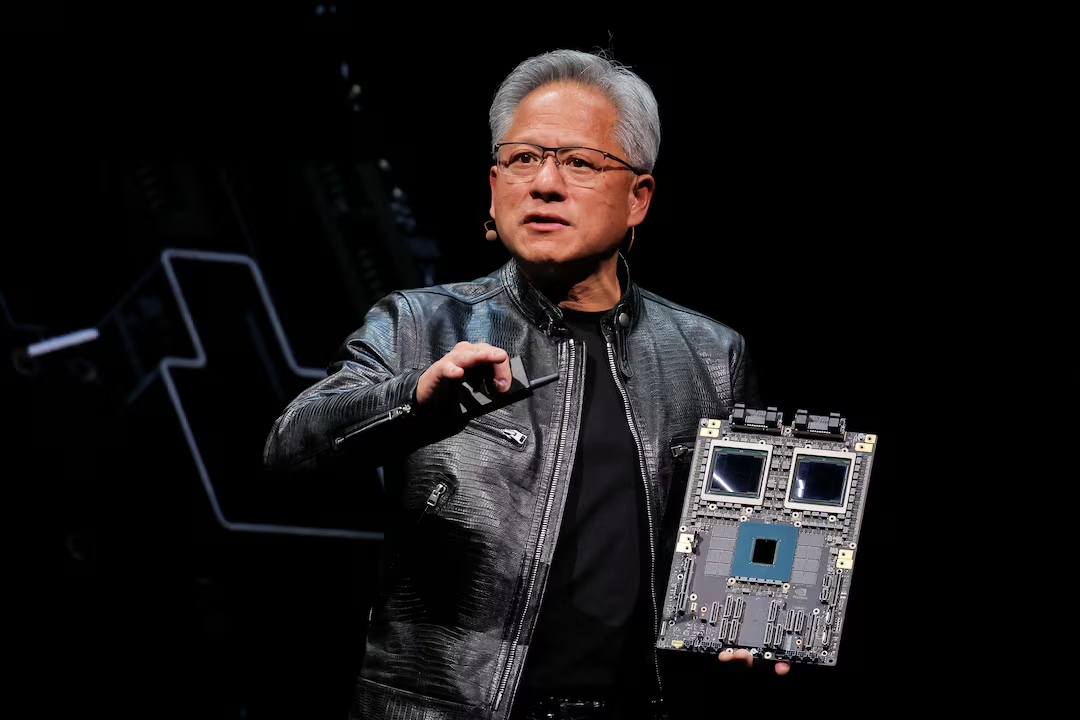

Why Did Jensen Huang Send His Daughter to Negotiate Nvidia's Most Important Industrial Partnership?

South Korea deploys more industrial robots per manufacturing worker than almost any nation on earth. It has, for years, been the laboratory where the theoretical promise of automated manufacturing meets the unforgiving reality of high-precision, high-volume production. So when Madison Huang (Nvidia's senior director of product marketing for physical AI platforms and, as it happens, the eldest daughter of CEO Jensen Huang) flew to Seoul on April 28, 2026, and spent two days meeting the leadership of Samsung, SK Hynix, LG Electronics, Hyundai Motor, and Doosan Robotics in rapid succession, it wasn't a courtesy tour. It was a sales call on the industrial world's most demanding customers.

The Chip That Sanctions Built: How DeepSeek V4 Turned Huawei Into China's Most Important Tech Company

Washington's export controls were supposed to freeze China out of the AI race. Instead, they handed Huawei a billion-dollar captive market, and DeepSeek just pulled the trigger.

The $39 Billion Circuit Board Company You've Never Heard Of Is the Clearest Proof the AI Hardware Boom Is Real

Here's a number that puts the AI hardware boom in perspective: Victory Giant Technology's Shenzhen-listed shares gained more than 580% in 2025 alone — making it the top performer in the MSCI Asia Pacific Index. Not a semiconductor company. Not a cloud platform. A printed circuit board manufacturer in Huizhou, Guangdong, founded by a former soldier who learned to sell PCBs at a Taiwanese-owned factory before deciding he could do it better himself.

OpenAI Brings Latest AI Models and Codex to AWS Bedrock

In a major shift in the AI cloud landscape, OpenAI has partnered with Amazon Web Services (AWS) to bring its latest AI models and Codex to Amazon Bedrock. This move expands access to OpenAI’s advanced capabilities beyond its earlier cloud limitations and signals a new phase of competition in enterprise AI.

Google Just Signed the Pentagon's Blank-Cheque AI Deal. The Clause That Matters Is the One Nobody Is Talking About.

Anthropic refused to let Claude be used for fully autonomous weapons or domestic mass surveillance. The Pentagon blacklisted it — the first time in American history a US company had received a supply-chain risk designation not for a security failure, but for declining to accept a government contract clause. OpenAI signed within hours. xAI was already on board. And on April 28, 2026, Google completed the set.

OpenAI Reportedly Misses User and Sales Targets

Growth Expectations Meet Market Reality

India Just Made the World's Cheapest AI Compute Even Cheaper. The Real Test Is What Happens Next.

When Minister Ashwini Vaishnaw said India provides "the cheapest compute facilities in the world" at ₹67 per GPU-hour, he wasn't exaggerating. AWS charges roughly ₹330 per hour for H100 access. Azure sits even higher at approximately ₹590 per hour for comparable compute. The IndiaAI Mission — India's ₹10,372 crore ($1.1 billion) state-backed AI infrastructure programme — has been systematically forcing those numbers down with every successive procurement round since 2024. The fourth round, completed in April 2026, takes the logic further: next-generation Nvidia B200 GPUs at ₹290.7 per hour for a single unit, a 10% cut from the previous benchmark, and a price point that places cutting-edge Blackwell architecture barely above the cost of the older H100s that most Indian developers were previously using.
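The price gaps in that paragraph are easier to see as ratios. The short sketch below takes the quoted rupee figures at face value and computes how many times more the cloud H100 rates cost than the ₹67 headline rate. It also backs out the previous B200 benchmark, assuming "a 10% cut" means new price = previous price × 0.9 (the article does not state the earlier figure, so that value is an inference, not a reported number). The constant and function names are illustrative only.

```python
# Rupee-per-GPU-hour figures as quoted in the IndiaAI Mission paragraph.
# Assumption: the figures are taken at face value and treated as comparable.
INDIAAI_RATE = 67.0   # headline IndiaAI rate cited by the minister
AWS_H100 = 330.0      # approximate AWS H100 price quoted in the article
AZURE_H100 = 590.0    # approximate Azure price for comparable compute
B200_ROUND4 = 290.7   # fourth-round B200 price for a single unit
B200_CUT = 0.10       # stated 10% reduction from the previous benchmark


def price_multiple(cloud_price: float, subsidised_price: float) -> float:
    """Return how many times more expensive cloud_price is than subsidised_price."""
    return cloud_price / subsidised_price


def implied_previous_price(new_price: float, cut: float) -> float:
    """Back out the earlier benchmark, assuming new_price = previous * (1 - cut)."""
    return new_price / (1.0 - cut)


if __name__ == "__main__":
    print(f"AWS H100 vs IndiaAI rate:   {price_multiple(AWS_H100, INDIAAI_RATE):.1f}x")
    print(f"Azure H100 vs IndiaAI rate: {price_multiple(AZURE_H100, INDIAAI_RATE):.1f}x")
    prev = implied_previous_price(B200_ROUND4, B200_CUT)
    print(f"Implied previous B200 benchmark: INR {prev:.1f} per hour")
```

On these inputs AWS works out to roughly 4.9 times the IndiaAI rate and Azure to roughly 8.8 times, while the implied earlier B200 benchmark lands near ₹323 per hour.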

SK Telecom and NVIDIA Are Building Korea's AI Brain — and the Rest of Asia Is Watching

South Korea has 51 million people, one of the world's highest 5G penetration rates, and a semiconductor industry — anchored by Samsung and SK Hynix — that supplies the memory chips inside nearly every AI system on the planet. It also has a government that decided, early and decisively, that it would not outsource its AI future to Silicon Valley. That political choice created the conditions for what SK Telecom (SKT) and NVIDIA are now building together: a sovereign AI stack so deep, so vertically integrated, and so deliberately designed to outlast any single product cycle that it amounts to a new kind of national infrastructure.

DeepSeek Slashes V4 Pro Prices by 75% — and Western AI Labs Should Be Paying Attention

A 1.6-trillion-parameter model now costs less than a cup of coffee per million words. The AI pricing war isn't coming. It's already here.

Hanwha Expands Space Strategy With AI Integration

A Conglomerate’s Strategic Space Bet

South Korea's Hanwha Is Building the World's Most Integrated AI-Space War Machine

The conglomerate that makes howitzers and satellites and autonomous warships is now fusing all three with artificial intelligence. South Korea's strategic bet on space-based AI isn't just a defense play — it's a template for the next generation of tech-industrial power.

Google Is Investing Up to $40 Billion in Its Own Biggest AI Rival — and That Tension Is the Whole Story

Google's landmark Anthropic investment mixes capital, cloud compute, and competitive risk in ways that reveal how the frontier AI race has fundamentally changed who counts as a partner.

An Apology Won't Fix the Decision OpenAI Made Before the Tumbler Ridge Shooting

Sam Altman's letter to Tumbler Ridge is the right gesture, offered far too late — and it sidesteps the structural question that nobody in the AI industry wants to answer.

When an AI-Native Company Lays Off Its Engineers, Something Is Actually Working

The startup said it was built AI-first from day one. Then it fired 30% of its engineers to prove it.

OpenAI Is Collapsing Its Products Into One. GPT-5.5 Is the Foundation.

The company's latest model isn't just a performance upgrade — it's a calculated step toward merging ChatGPT, Codex, and an AI browser into a single unified product that could lock in enterprise customers for years.